Making ARdeck @ WhatTheHack

This past weekend, I participated in WhatTheHack, a virtual 24-hour iOS hackathon. By the end, our team had built ARdeck, an app that lets you control the popular streaming software OBS with hand gestures.

It was a lot of fun to get my hands dirty and ship a working app in such a short time. Here's a behind-the-scenes look at the hackathon. If you'd rather read this in tweet form, check out the live tweet thread here.

Discovering WhatTheHack and finding a team

Lately, I've been getting involved in the iOS community as a way to expand my mobile development skills (even though I love my backend and frontend work). When I saw a tweet about the hackathon, I figured I'd jump in at the deep end.

I didn’t have a team and figured I’d just work solo, but I saw that Sam, a mutual follower, was looking for a teammate, so I reached out. We then found another pair of developers, Ezra and Nedim, to team up with. We had a squad.

Coming up with an idea

A hackathon is a great opportunity to work with technologies you might be interested in but haven’t yet been able to put to use. We all had an interest in incorporating Apple’s ARKit and Vision frameworks in some way.

We started jotting down ideas and came up with plenty of cool ones, but nothing stood out. They were safe ideas that wouldn’t push our limits.

Mid-conversation, I remember asking “what if we could make a StreamDeck, but with an AR/ML spin?”

Everyone paused, and we had that “a-ha” moment where we realized it was the right idea: an app that lets you control your stream with gestures.

Making a game plan

Now that we had an idea, we thought about how to deliver it effectively within the 24-hour timeframe. It was clear we needed a stable architecture and design to ship something this sophisticated.

For the architecture, we agreed to use UIKit (instead of the newer SwiftUI) because the team was more familiar with it. We knew we would need to get up to speed on ARKit and Vision, as well as build a gesture data set. Lastly, we needed to figure out how to control OBS remotely from the app (this later turned out to involve WebSockets).

I took the lead on product design: I had more experience with SwiftUI than UIKit, but I still knew what to expect from UIKit. Nedim took on the WebSockets functionality, which I assisted with since I’ve implemented WebSocket services in production before. Sam and Ezra took on ARKit and Vision, as well as training and validating an ML data set of the gestures we wanted to recognize.

We had a game plan.

Executing the game plan

Once the hackathon officially started, we were ready to start building the app… but we needed a codename first. Since its DNA combined AR and the StreamDeck, we decided to call it ARdeck.

With a name in place, our goal was to divide and conquer and iterate quickly. We were all in different time zones, so we coordinated our schedules to hand off work effectively.

Sam knew the most time-consuming part would be training the gesture recognition model, so he got to work taking 300+ pictures of various hand positions for the few gestures we planned to support, and running them through an image recognition and classification model on Azure.

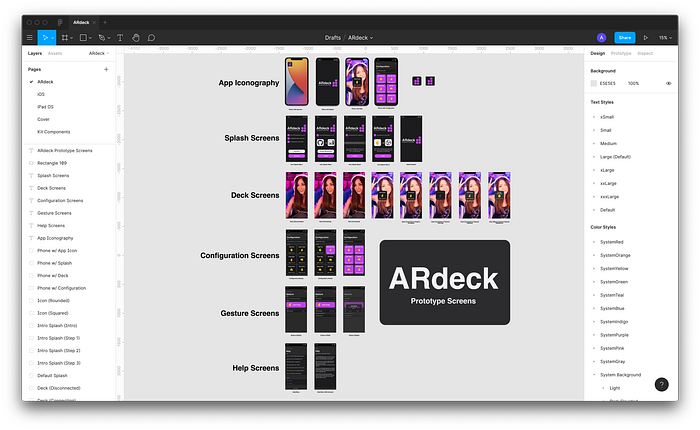

While he worked on that, I finalized the product design in Figma so that the team would have to spend less time thinking through the UX and design.

Nedim found an open-source WebSocket plugin for OBS, and we built an abstraction that the app calls whenever it sends a command to OBS. Once we had some non-UI code controlling OBS, we knew we were ready to start looping in the others.
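To make the idea of that abstraction concrete, here is a minimal sketch of what such a command layer can look like. It assumes the plugin speaks the obs-websocket 4.x JSON format, where each request carries a `request-type` and a `message-id`. `OBSCommand`, `OBSTransport`, and `OBSController` are hypothetical names chosen for illustration, and the transport is a protocol so the encoding can be exercised without a live OBS instance.

```swift
import Foundation

/// Hypothetical high-level commands the app might send to OBS.
enum OBSCommand {
    case switchScene(String)
    case toggleMute(source: String)
    case startStopStreaming

    /// Request type name, assuming the obs-websocket 4.x protocol.
    var requestType: String {
        switch self {
        case .switchScene: return "SetCurrentScene"
        case .toggleMute: return "ToggleMute"
        case .startStopStreaming: return "StartStopStreaming"
        }
    }

    /// Extra request fields for each command (4.x field names, assumed).
    var fields: [String: String] {
        switch self {
        case .switchScene(let name): return ["scene-name": name]
        case .toggleMute(let source): return ["source": source]
        case .startStopStreaming: return [:]
        }
    }
}

/// Anything that can deliver a text frame, e.g. a WebSocket connection. Hypothetical.
protocol OBSTransport {
    func send(text: String)
}

/// Turns commands into JSON messages and hands them to the transport.
final class OBSController {
    private let transport: OBSTransport
    private var nextMessageID = 1

    init(transport: OBSTransport) {
        self.transport = transport
    }

    /// Encodes and sends a command, returning the message ID so a response can be matched later.
    @discardableResult
    func send(_ command: OBSCommand) throws -> String {
        let messageID = String(nextMessageID)
        nextMessageID += 1

        var payload = command.fields
        payload["request-type"] = command.requestType
        payload["message-id"] = messageID

        // Sorted keys keep the output deterministic, which makes it easy to test.
        let data = try JSONSerialization.data(withJSONObject: payload, options: [.sortedKeys])
        transport.send(text: String(decoding: data, as: UTF8.self))
        return messageID
    }
}
```

With a transport that simply records frames, `try obs.send(.switchScene("Gameplay"))` produces a message whose `request-type` is `SetCurrentScene` and whose `scene-name` is `Gameplay`. Keeping the UI unaware of JSON and sockets is what lets non-UI code drive OBS before any screens exist.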

In the meantime, Ezra and Sam were hard at work implementing the project’s UI and incorporating some of the AR/ML boilerplate. Nedim and I then came in and helped finish the app by tying the two parts together.
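One way to picture the glue between the two halves is a small dispatcher that sits between per-frame gesture predictions and stream actions. The sketch below is hypothetical: the `Gesture` labels, the `GestureDispatcher` type, and its thresholds are illustrative assumptions, not taken from the app. It fires an action only after the same gesture is seen confidently over several consecutive frames, and then applies a cooldown so that holding a hand pose does not spam OBS with commands.

```swift
import Foundation

/// Hypothetical gesture labels a hand-pose classifier might emit.
enum Gesture: String {
    case openPalm, fist, peace, thumbsUp
}

/// One frame's classifier output: a label plus a confidence in 0...1.
struct Prediction {
    let gesture: Gesture
    let confidence: Double
}

/// Debounces noisy per-frame predictions before triggering stream actions.
final class GestureDispatcher {
    private let actions: [Gesture: () -> Void]
    private let minConfidence: Double
    private let requiredFrames: Int
    private let cooldown: TimeInterval

    private var candidate: Gesture?
    private var streak = 0
    private var lastFired: [Gesture: TimeInterval] = [:]

    init(actions: [Gesture: () -> Void],
         minConfidence: Double = 0.8,
         requiredFrames: Int = 5,
         cooldown: TimeInterval = 2.0) {
        self.actions = actions
        self.minConfidence = minConfidence
        self.requiredFrames = requiredFrames
        self.cooldown = cooldown
    }

    /// Feed one frame's prediction (nil if no hand was found) and its timestamp in seconds.
    func process(_ prediction: Prediction?, at time: TimeInterval) {
        // A missing or low-confidence prediction breaks the current streak.
        guard let prediction = prediction, prediction.confidence >= minConfidence else {
            candidate = nil
            streak = 0
            return
        }

        // Count consecutive frames showing the same gesture.
        if prediction.gesture == candidate {
            streak += 1
        } else {
            candidate = prediction.gesture
            streak = 1
        }
        guard streak >= requiredFrames else { return }

        // Holding a gesture re-triggers at most once per cooldown window.
        if let last = lastFired[prediction.gesture], time - last < cooldown { return }

        lastFired[prediction.gesture] = time
        streak = 0
        actions[prediction.gesture]?()
    }
}
```

In an app, each closure in `actions` would call into the OBS command layer (for example, a fist toggles mute), so neither the camera code nor the WebSocket code needs to know about the other.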

Much of our time went into pair programming and pair debugging. We realized it was the best way to tackle problems quickly, reduce fatigue, and avoid large code conflicts or drift given the hackathon’s short timeframe. It paid off: we got nearly all screens implemented with their baseline functionality, a huge milestone.

Presenting the final product

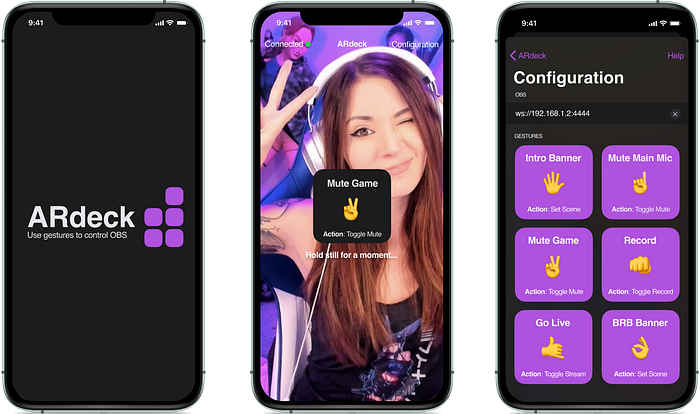

24 hours after starting, we managed to get a functional version of the app out the door. We presented the final product to the entire hackathon, along with a live demo. Here are some product shots:

We were thrilled to win the award for Best Product! Check out the award and demo:

Lessons learned and next steps

Even with all the awesome outcomes of the hackathon, we definitely learned some lessons worth noting…

- If you’re collaborating, settle your Git strategy as early as possible. We lost critical time to Git sorcery because we didn’t anticipate some of the conflicts that would arise.

- Unfortunately, we had to fall back on an existing ML model instead of creating our own, which meant none of the photos Sam took were put to use. Credit to him for recognizing the problems early and adapting to them.

- We should have settled our architecture a bit more formally. We fell victim to some of the “rush” that comes with a hackathon, and as a result, we didn’t organize some logic in a way that made things easier. A little forethought in app architecture often pays dividends as you build out sophisticated logic.

- Given the time zone differences, we probably could have planned our rotation better to avoid fatigue and make sure nobody was stuck playing a waiting game. It didn’t come up often, but it had a subtle effect on our productivity.

- We were agile and able to reduce the scope of work when needed. The product design was demanding for a 24-hour turnaround, so whenever the team recognized inherent complexity somewhere, we talked it out, proposed a design change, and moved forward with it. I think this was a key part of our success in delivering a simple yet impressive demo app.

As for next steps, we want to keep moving ARdeck forward in some form. Here are a few of the things we have in mind:

- Convert it from UIKit to SwiftUI: Many of the visual components are far more straightforward in SwiftUI, which will help keep the implementation maintainable.

- Implement the WebSockets functionality fully with first-party libraries: Instead of using a third-party library for WebSockets, we want to implement it ourselves and make the control flow more stable and efficient.

- Use our own ML models and support varying visual conditions: We should train a model tailored to our specific needs, and make it more tolerant of distractions in the background of the camera’s view.
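For the WebSockets item, one concrete part of stabilizing the control flow is matching every response to the request that triggered it, instead of assuming replies arrive in order. Below is a minimal sketch of that bookkeeping. It assumes obs-websocket 4.x-style responses that echo the request's `message-id` and report `status` as `"ok"` or `"error"`. `PendingRequests` and `OBSResult` are hypothetical names. In an app, `handle(frame:)` would be called from the receive loop of Foundation's first-party `URLSessionWebSocketTask` (iOS 13+) whenever a `.string` message arrives.

```swift
import Foundation

/// Outcome reported back for a single request.
enum OBSResult: Equatable {
    case ok
    case failed(String)
}

/// Tracks in-flight requests by message ID and resolves them when responses arrive.
final class PendingRequests {
    private var handlers: [String: (OBSResult) -> Void] = [:]
    private let lock = NSLock()  // frames may arrive on a background queue

    /// Remember a completion handler for a request that was just sent.
    func register(id: String, completion: @escaping (OBSResult) -> Void) {
        lock.lock()
        defer { lock.unlock() }
        handlers[id] = completion
    }

    /// Parses one incoming text frame. Returns false for frames that match no
    /// pending request, such as unsolicited events that carry no message ID.
    @discardableResult
    func handle(frame text: String) -> Bool {
        guard
            let object = try? JSONSerialization.jsonObject(with: Data(text.utf8)),
            let json = object as? [String: Any],
            let id = json["message-id"] as? String
        else { return false }

        // Remove the handler under the lock, but call it outside the lock.
        lock.lock()
        let handler = handlers.removeValue(forKey: id)
        lock.unlock()

        guard let resolve = handler else { return false }
        if json["status"] as? String == "ok" {
            resolve(.ok)
        } else {
            resolve(.failed(json["error"] as? String ?? "unknown error"))
        }
        return true
    }

    /// Number of requests still waiting for a response.
    var count: Int {
        lock.lock()
        defer { lock.unlock() }
        return handlers.count
    }
}
```

Because each handler is removed as soon as its response is seen, a duplicate or late reply is simply ignored, and anything left in `count` after a timeout points to a request OBS never answered.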

I really enjoyed working with people I had just met to bring something like this to life. I’m looking forward to future hackathons and other ways to improve my skills both as an engineer and an architect.